
Gradient descent is a general optimization technique that can be used to minimize any differentiable cost function. It is often used when the optimum cannot be found with a closed-form solution. Say we want to minimize a cost function: gradient descent starts from some random initial point and repeatedly moves against the gradient, the direction of steepest descent, in order to decrease the cost. If your problem is small enough that it can be solved efficiently by an off-the-shelf least squares solver, you should probably not use gradient descent.
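As a rough illustration of the procedure described above, here is a minimal sketch of batch gradient descent fitting a one-feature line, compared against NumPy's least squares solver. The function name `fit_line_gradient_descent`, the learning rate, the step count, and the synthetic data are all assumptions chosen for the example, not something taken from the original discussion.

```python
import numpy as np


def fit_line_gradient_descent(x, y, learning_rate=0.1, n_steps=2000, seed=0):
    """Fit y ~ w * x + b by batch gradient descent on the mean squared error."""
    rng = np.random.default_rng(seed)
    w, b = rng.normal(size=2)  # random initial point
    n = len(x)
    for _ in range(n_steps):
        residual = w * x + b - y                 # prediction error at the current point
        grad_w = 2.0 / n * np.dot(residual, x)   # d(MSE)/dw
        grad_b = 2.0 / n * residual.sum()        # d(MSE)/db
        w -= learning_rate * grad_w              # step against the gradient
        b -= learning_rate * grad_b
    return w, b


# Hypothetical synthetic data: a noisy line with slope 3 and intercept 1.
rng = np.random.default_rng(42)
x = rng.uniform(-1.0, 1.0, size=200)
y = 3.0 * x + 1.0 + rng.normal(scale=0.1, size=200)

w_gd, b_gd = fit_line_gradient_descent(x, y)

# Off-the-shelf least squares solver on the same data, for comparison.
A = np.column_stack([x, np.ones_like(x)])
(w_ls, b_ls), *_ = np.linalg.lstsq(A, y, rcond=None)

print(f"gradient descent: w={w_gd:.4f}, b={b_gd:.4f}")
print(f"least squares:    w={w_ls:.4f}, b={b_ls:.4f}")
```

On a problem this small the two fits should agree closely, and the direct solver needs no learning rate or step count, which is consistent with the advice to prefer a least squares solver when the problem is small.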

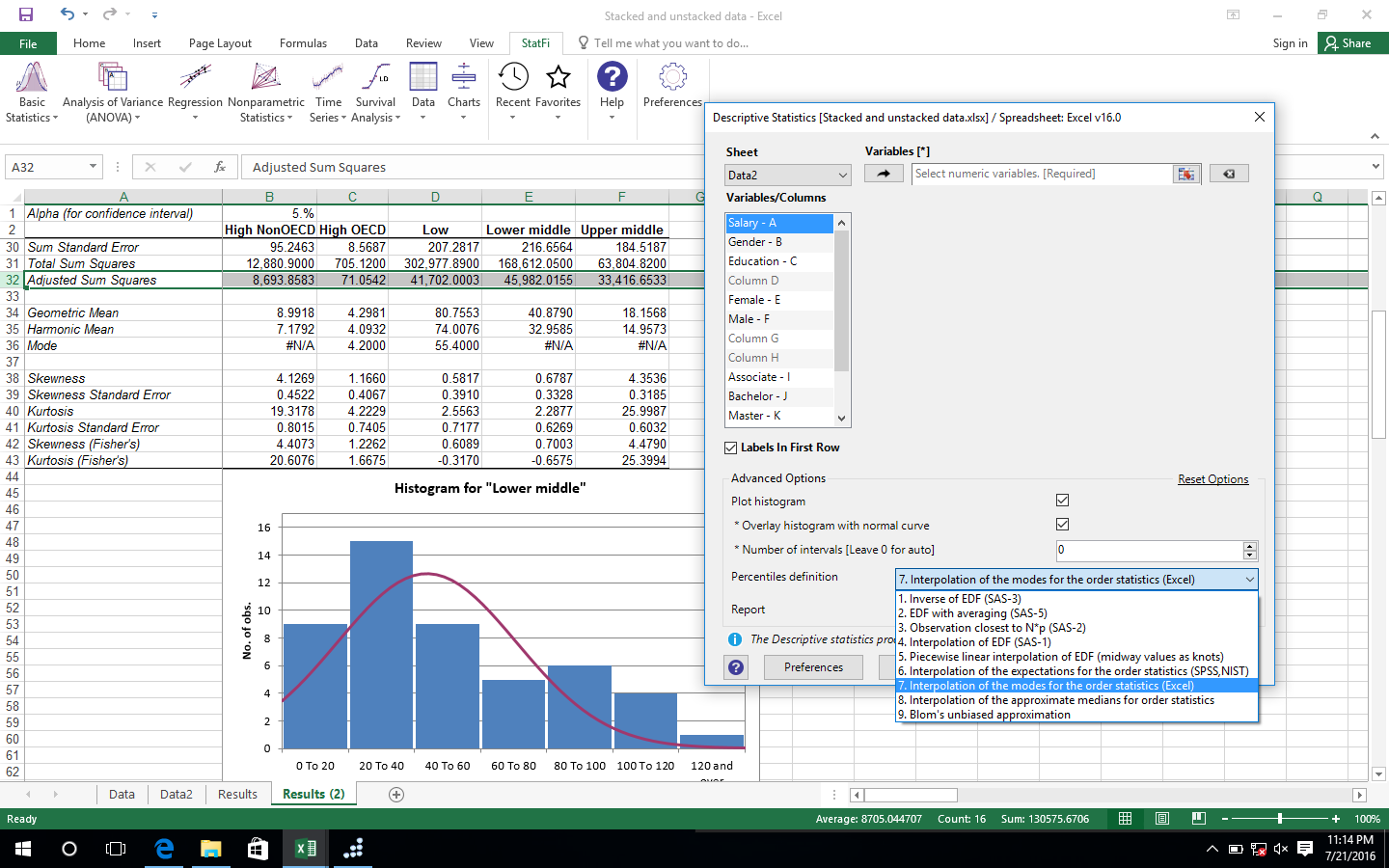

Question: Why do we use gradient descent in linear regression?

In some machine learning classes I took recently, I've covered gradient descent to find the best-fit line for linear regression. In some statistics classes, I have learnt that we can compute this line using statistical analysis, using the mean and standard deviation - this page covers this approach in detail. Why is this seemingly simpler technique not used in machine learning? My question is: is gradient descent the preferred method for fitting linear models? If so, why? Or did the professor simply use gradient descent in a simpler setting to introduce the class to the technique?

Answer: The example you gave is one-dimensional, which is not usually the case in machine learning, where you have multiple input features. In that case, you need to invert a matrix to use their simple approach, which can be hard or ill-conditioned. Usually the problem is formulated as a least squares problem, which is slightly easier. There are standard least squares solvers which could be used instead of gradient descent (and often are). If the number of data points is very high, using a standard least squares solver might be too expensive, and (stochastic) gradient descent might give you a solution that is as good in terms of test-set error as a more precise solution, with a run-time that is orders of magnitude smaller (see this great chapter by Leon Bottou).

StatPlus:mac is the most affordable solution for data analysis on Mac with Excel. You will benefit from the reduced learning curve and attractive pricing while enjoying the benefits of precise routines and calculations. The Mac/PC license is permanent; there are no renewal charges. Upgrade now to the Pro version and get over 70 features and multi-platform compatibility.
- Rank correlations (Kendall Tau, Spearman R, Gamma, Fechner).
- 2x2 tables analysis (Chi-square, Yates Chi-square, Exact Fisher Test, etc.).
- Within subjects ANOVA and mixed models.
- Post-hoc comparisons - Bonferroni, Tukey-Kramer, Tukey B, Tukey HSD, Newman-Keuls, Dunnett.
- One-way and two-way ANOVA (with and without replications).
- Multiple definitions for computing quantile statistics.
- Frequency tables analysis (for discrete and continuous variables).
- Normality tests (Jarque-Bera, Shapiro-Wilk, Shapiro-Francia, Cramer-von Mises, Anderson-Darling, Kolmogorov-Smirnov, D'Agostino's tests).
- Options to emulate Excel Analysis ToolPak results and migration guide for users switching from Analysis ToolPak.
- Permanent license and free major upgrades during the maintenance period.
- 'Add-in' mode for Apple Numbers v3, v4 and v5.
- Standalone spreadsheet with Excel (XLS and XLSX), OpenOffice/LibreOffice Calc (ODS) and text documents support.

Free or Premium? Features Comparison - StatPlus:mac Pro vs.

- Mann-Whitney U Test, Kolmogorov-Smirnov test, Wald-Wolfowitz Runs Test, Rosenbaum Criterion.
- Unit root tests - Dickey–Fuller, Augmented Dickey–Fuller (ADF test), Phillips–Perron (PP test), Kwiatkowski–Phillips–Schmidt–Shin (KPSS test).
- Tests for heteroscedasticity: Breusch–Pagan test (BPG), Harvey test, Glejser test, Engle's ARCH test (Lagrange multiplier) and White test.
- Stepwise (forward and backward) regression.
- Weighted least squares (WLS) regression.
- Multivariate linear regression (residuals analysis, collinearity diagnostics, confidence and prediction bands).
- Wilcoxon Matched Pairs Test, Sign Test, Friedman ANOVA, Kendall's W (coefficient of concordance).
- Kaplan-Meier (log rank test, hazard ratios).
- Receiver operating characteristic curves analysis (ROC analysis).
- AUC methods - DeLong's, Hanley and McNeil's.
- LD values (LD50/ED50 and others), cumulative coefficient calculation.
